A Wardenclyffe in Space: Orbital Data Centers Deserve a Chance

Why an absurd space bet could launch critical industries on Earth.

On the morning of July 4, 1917, a demolition crew arrived at the foot of the Wardenclyffe Tower. The 187-foot lattice structure was Nikola Tesla’s most ambitious and most ruinous project—the first node in a global wireless power network, funded by pitching J.P. Morgan the more digestible promise of a transatlantic communications station. When Morgan grasped the strangeness of what Tesla really intended, he pulled his money. No one else would touch it. The tower stood unfinished, the lab went dark, and Tesla slid into decades of debt and isolation.

The difficulty was that Wardenclyffe had never really belonged to the world of engineering. It was a creature of conviction. When the press demanded proof of concept, Tesla offered them instead a kind of gospel, writing that civilization’s highest calling was to harness the earth’s energy and beam it, free of charge, to every corner of the globe. Wardenclyffe was meant to be that dream’s first physical expression. But the useful irony of Tesla’s predicament was that several of his most valuable inventions—high-frequency alternating current and early wireless signaling—were developed as intermediate steps toward a goal whose premise was, even in his own time, understood to be dubious.

A Taller Tower

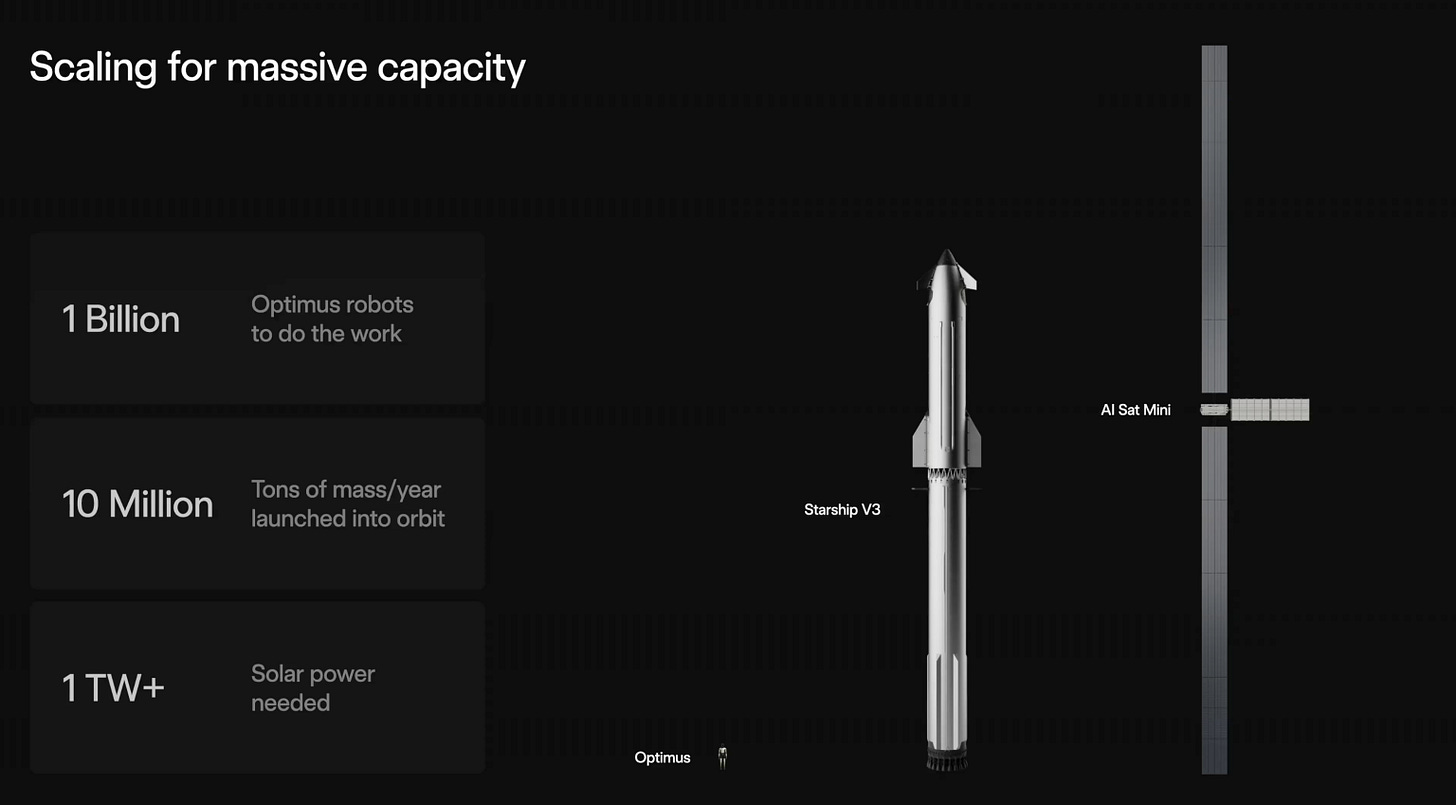

It’s fitting, then, that on February 5, 2026, Tesla CEO Elon Musk appeared on the Dwarkesh Podcast and declared that within three years, space would be the cheapest place to run AI data centers. Days earlier, SpaceX had filed with the FCC for authorization to launch an unprecedented one million satellites as orbital data centers, framing the effort as humanity’s “first step towards becoming a Kardashev II-level civilization–one that can harness the Sun’s full power.” The application came just as SpaceX finalized its acquisition of xAI.

Much ink has already been spilled on why this is, to put it mildly, premature. The economics are punishing: although solar energy is more plentiful in space, orbital data centers are projected to cost roughly three times their terrestrial equivalents unless launch prices fall by an order of magnitude, and hardware would degrade rapidly in the unforgiving environment. The filing is silent on these questions, containing no technical specifications, no deployment schedule, and no cost estimates. But Musk has since offered a rough first sketch: a “mini” orbital compute satellite with exceptionally long solar arrays paired with a comically small radiator, often the limiting component in keeping chips from overheating. Suffice it to say, the presentation did little to resolve the core economic and engineering doubts.

Musk’s ambitions, like Tesla’s, are largely vision-driven. For Musk, SpaceX exists to make humanity multiplanetary, and each venture along the way (Falcon 9, Starlink, and now orbital data centers) seeks to monetize an intermediate step, with each new revenue stream funding the next stage. Orbital data centers are Starship’s Starlink, a use case that could generate the cash and industrial capacity to keep climbing. And the bet may not be wholly indefensible. Terrestrial data center costs are rising fast, with over $160 billion in U.S. projects blocked or delayed by local opposition since 2023, which narrows the cost gap between Earth and orbit. And where Tesla had one financier and no fallback, Musk commands the world’s most valuable private company and the only operational super-heavy-lift rocket on Earth.

Our contention is that a well-resourced, ideologically driven actor spending aggressively into a speculative frontier can generate demand signals, train workforces, and pull supply chains into existence that the market, left to its own tendencies, would never produce. This creates a “crowding-in” effect where significant upfront capital catalyzes additional activity by de-risking a sector, improving expectations, and creating complementary inputs. Even a partial push to build orbital compute, whether by Musk or by other projects like Google’s Project Suncatcher or the Nvidia-backed Starcloud, would set in motion a cascade of intermediate developments that matter regardless of whether a million satellites ever reach orbit.

This Wardenclyffe may never transmit power; what matters is what gets built on the way up.

Reshoring Precision Optics

Although Starlink already uses laser links to route internet traffic across its constellation, synchronizing computation across a distributed data center is far more demanding. This requires multi-terabit throughput, ultra-low latency, and far more links per satellite. The number of optical transceivers required, and the performance demanded of each, would be orders of magnitude beyond what Starlink currently possesses.

The United States cannot currently manufacture these at scale. The most intricate and expensive segment of photonics manufacturing—packaging and assembling individually fabricated optical components into a finished module—has largely been offshored, with Asia holding the majority of the global manufacturing footprint. In 2025, every transceiver in a Google TPU pod was made in China, and roughly 70% of Nvidia’s came from two Chinese companies. Beijing has made photonics a strategic priority and sees it as one avenue to sidestep U.S. chip export controls—that is, by pairing advanced photonics with legacy hardware that isn’t restricted. Optical transceivers also run firmware, so a compromised module can spoof identity, kill links, or enable deeper intrusion. When most transceivers come from a country with a history of embedding backdoors in firmware, that poses a serious security risk.

An orbital data center buildout would force this problem open. SpaceX’s classified government contracts and the dual-use nature of its constellation effectively preclude sourcing critical components from Chinese manufacturers. Given SpaceX’s pattern of vertical integration—roughly 85 percent of components are built in-house—it would almost certainly build domestic optical packaging and testing lines. These effects would propagate through three channels:

Workforce

SpaceX functions as an extraordinarily intense training ground, and people leave. As of January 2026, SpaceX alumni have founded 141 startups that have raised over $10.6 billion, and the crowding-in mechanism is already at work: In February 2026, three former SpaceX engineers who developed optical communications links for “compute-hungry” Starlink satellites raised a $50 million Series A for Mesh Optical, a company explicitly aimed at building the largest optical transceiver manufacturing footprint outside of Asia. An orbital data center program would train optical engineers at multiples of the current Starlink workforce, dramatically increasing the pipeline for exactly this kind of spinout.

Upstream suppliers

Even at 85 percent vertical integration, SpaceX still relies on over 3,000 suppliers, so it would likely purchase specialized inputs and optical test and measurement equipment. When SpaceX creates significant domestic demand for this equipment, suppliers have reason to establish U.S. service centers, training programs, and application engineering teams. That infrastructure, once built, is available to any American company, including new entrants and startups like Mesh, at lower cost and shorter lead times than sourcing from overseas.

First-mover advantage

China’s foothold in transceiver manufacturing was established in part because Huawei pioneered linear-drive pluggable optics, a design simplification that greatly reduces cost and power consumption. The next architectural transition from what are known as pluggable transceivers to co-packaged optics is still in its early stages, and the manufacturing processes are not yet locked in. A domestic buildout that produces next-generation optical architectures could establish American dominance before the market consolidates.

Radiation-Tolerant Compute

Every chip in an orbital data center would operate in a radiation environment that terrestrial hardware was never designed to survive. The SpaceX filing is exclusively for low Earth orbit (LEO), due to the added launch cost, networking challenges, and harsher conditions of higher regimes like geostationary orbit (GEO). Even in LEO, cosmic rays and solar events continuously bombard silicon, causing “bit flips” that corrupt operations and—in extreme cases—frying a whole chip. Any orbital compute platform would need processors that can withstand these effects, through either hardened-by-design chip architectures or novel shielding materials.

The problem is that the domestic industrial base for this kind of hardware is surprisingly thin. Musk has acknowledged as much: he recently floated a “Terafab” in Austin to produce these advanced chips for SpaceX and has just listed openings for engineers at the proposed facility. The numbers bear out the thinness. In 2025, the entire global radiation-hardened electronics market was valued at roughly $2 billion, a rounding error next to the $600 billion-plus broader semiconductor industry. The Department of War’s Trusted Foundry program, which guarantees access to secure domestic fabrication for sensitive military applications, covered only 2 percent of military chip purchases as of 2021.

The reason is simple: a lack of demand. The Department of War tried to stimulate the industry by growing its microelectronics budget to over $1 billion in 2024 in areas where private foundries “lack the incentives or scale to develop solutions independently,” including radiation-hardened chips. Still, the military needs radiation-hardened chips in quantities of hundreds to thousands, simply not enough to sustain the production lines that a healthy industry requires. An orbital data center constellation would change the arithmetic entirely. SpaceX would need domestically produced radiation-tolerant processors at a volume that would, for the first time, create a commercial-scale demand signal to build out American industrial capacity. Companies like Cosmic Shielding Corporation, whose Pentagon contracts currently max out at a meager $4 million, would face a qualitatively different market, and their expanded capacity would benefit both private and military use cases.

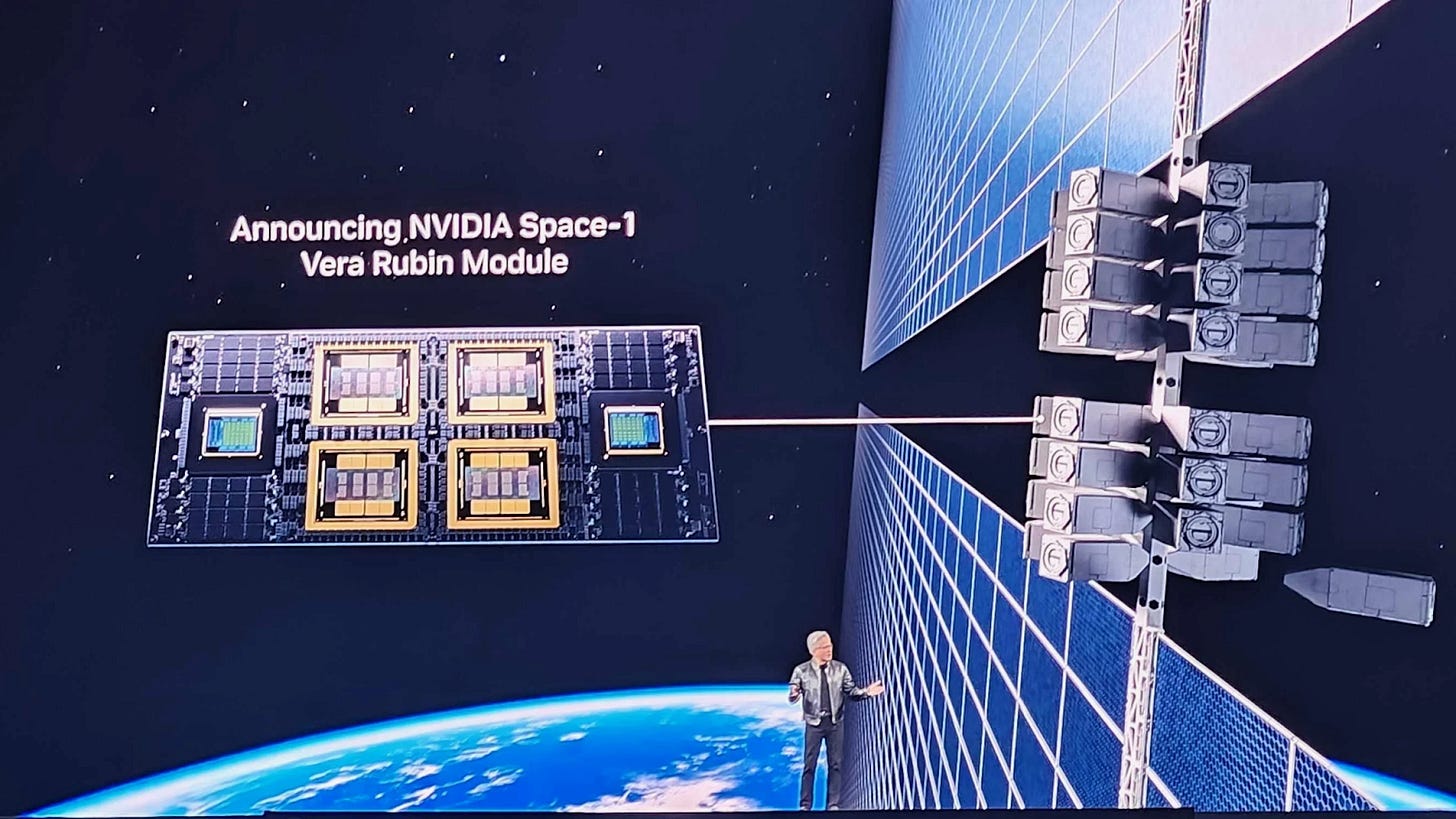

Just weeks after Musk’s declaration, we can already see the beginnings of a crowding-in effect. On February 25, Nvidia CEO Jensen Huang seemed skeptical of the economics of orbital data centers. Less than three weeks later, however, Nvidia unveiled plans to build the first major purpose-built GPU module for orbital data centers, which would yield 25 times more compute for space-based inference than the H100 that Starcloud first put in orbit just months earlier. Although it’s unlikely that such a major push into a new vertical was planned in just three weeks, Musk’s aggressive socialization of orbital compute created a permission structure to seriously discuss the breakthroughs that will be needed, and galvanized the world’s most important chipmaker into deciding that the market was real enough to commit to publicly.

Expansion of Low-Level Software Controls and Chip Governance

Once launched, orbital chips cannot be inspected, swapped, or physically patched. That constraint would push more of a chip’s operating logic into updatable firmware and low-level control software, making remote updates one of the only ways to improve or constrain device behavior after deployment.

This expansion in the reach of low-level software would matter not only for efficiency but also for security and governance. In orbit, remote updates would become one of the few tools left to retune device behavior, refine low-level scheduling, and improve fault recovery without replacing the chip. For national security, the strategic spillover is that the same software-mediated update paths can make chips more governable after deployment, especially in U.S. export-controlled or restricted settings. Low-level software has already been used for compute governance. In 2021, Nvidia announced a new RTX 3060 driver that would hamstring performance by 50 percent if Ethereum cryptocurrency mining was detected (in a cat-and-mouse twist, users later circumvented the limit using a leaked beta driver). This episode didn’t demonstrate omnipotent vendor control, and that’s a good thing. But it did highlight that AI accelerators are mediated by low-level software layers that can classify workloads, enforce policy, and be revised after sale. Space-based data centers would intensify investment in exactly these capabilities, spawning further feature-rich chips whose operation is mediated by signed firmware, attestation, and policy-controlled updates. On Earth as in orbit, that would give U.S. actors more ways, when necessary for national security, to enforce sanctioned operating modes long after deployment.

Power-Flexible Data Centers

Moving to space would also catalyze a power-flexible regime for data centers, which would address a major challenge at home: helping integrate data centers into the U.S. electric grid. In LEO at a 30-degree inclination (one of the inclinations requested by SpaceX), satellites circle the planet roughly every 95 minutes, of which about 30 are spent in eclipse and thus deprived of solar irradiance. Spacecraft bridge that gap with batteries; that is standard practice today. But powering a compute cluster through every eclipse is daunting: a 1-megawatt facility, for example, would need 500 kilowatt-hours of stored energy (1 megawatt for half an hour) to sustain full output through eclipse. Meanwhile, the largest publicly known battery system in space today is on board the massive ISS and rated at only 360 kilowatt-hours.
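The arithmetic behind that comparison can be checked in a few lines. The sketch below is a back-of-the-envelope calculation, not a spacecraft power model: it uses only the figures quoted above and assumes an idealized battery that can be fully drained. The `depth_of_discharge` parameter is a hypothetical addition for illustration; leaving it at 1.0 reproduces the number in the text, and lowering it shows how quickly the requirement grows once a battery cannot be fully cycled.

```python
# Back-of-the-envelope check of the eclipse battery figure quoted in the text.
# Inputs are the article's numbers; depth_of_discharge is a hypothetical
# illustrative parameter (1.0 means the battery can be fully drained).

def eclipse_storage_kwh(load_kw: float, eclipse_minutes: float,
                        depth_of_discharge: float = 1.0) -> float:
    """Battery capacity needed to carry `load_kw` through one eclipse."""
    if not 0.0 < depth_of_discharge <= 1.0:
        raise ValueError("depth_of_discharge must be in (0, 1]")
    return load_kw * (eclipse_minutes / 60.0) / depth_of_discharge


ORBIT_MINUTES = 95.0      # approximate orbital period from the text
ECLIPSE_MINUTES = 30.0    # approximate time in Earth's shadow per orbit
ISS_BATTERY_KWH = 360.0   # ISS battery rating cited in the text

needed_kwh = eclipse_storage_kwh(load_kw=1_000.0, eclipse_minutes=ECLIPSE_MINUTES)
eclipse_fraction = ECLIPSE_MINUTES / ORBIT_MINUTES

print(f"Storage for 1 MW through eclipse: {needed_kwh:.0f} kWh")
print(f"Share of each orbit spent in eclipse: {eclipse_fraction:.0%}")
print(f"Multiple of the cited ISS figure: {needed_kwh / ISS_BATTERY_KWH:.2f}x")
```

On these assumptions, a single megawatt of compute already needs more storage than the ISS figure cited above, and the satellite spends roughly a third of every orbit drawing on it.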

This reality would reward a power-flexible model of distributed computing, where a satellite preparing to enter eclipse can throttle down its power, checkpoint workloads for later (temporal shifting), delegate workloads across the constellation (spatial shifting), or combine any of these three, thus avoiding disruption to high-priority, latency-sensitive workloads. The result would be orchestration at constellation scale. This would feed innovation in terrestrial ventures, which already show promise: in a National Grid trial earlier this month, startup Emerald AI’s software cut the power draw of a 96-GPU Nvidia Blackwell Ultra cluster by more than a third in under 60 seconds without disrupting priority workloads. Orbital data centers would stimulate this market, supercharging the development of intelligent schedulers, distributed computing, and power management.
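To make the three options concrete, here is a minimal sketch of how a satellite might decide what to do with each workload as it approaches eclipse. It is a toy illustration, not a description of any real scheduler: the `Job` fields, the `plan_for_eclipse` function, the power figures, and the order of preference (run locally, then migrate, then checkpoint, then throttle) are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Job:
    name: str
    power_kw: float
    latency_sensitive: bool  # should keep running without interruption
    checkpointable: bool     # can be paused now and resumed after eclipse


def plan_for_eclipse(jobs, battery_budget_kw, spare_remote_kw):
    """Pick an action per job so local draw stays within the battery budget.

    Returns a dict mapping job name to "run", "migrate", "checkpoint",
    or "throttle". The preference order is an illustrative assumption.
    """
    plan = {}
    local_kw = 0.0
    remote_kw = spare_remote_kw
    # Latency-sensitive jobs get first claim on battery power.
    for job in sorted(jobs, key=lambda j: not j.latency_sensitive):
        if local_kw + job.power_kw <= battery_budget_kw:
            plan[job.name] = "run"
            local_kw += job.power_kw
        elif job.power_kw <= remote_kw:
            plan[job.name] = "migrate"     # spatial shifting
            remote_kw -= job.power_kw
        elif job.checkpointable:
            plan[job.name] = "checkpoint"  # temporal shifting
        else:
            plan[job.name] = "throttle"    # runs on whatever headroom is left
            local_kw = battery_budget_kw
    return plan


jobs = [
    Job("training-run", 600.0, latency_sensitive=False, checkpointable=True),
    Job("inference-api", 300.0, latency_sensitive=True, checkpointable=False),
    Job("batch-eval", 200.0, latency_sensitive=False, checkpointable=False),
    Job("indexing", 150.0, latency_sensitive=False, checkpointable=False),
]
plan = plan_for_eclipse(jobs, battery_budget_kw=400.0, spare_remote_kw=250.0)
for name, action in plan.items():
    print(f"{name}: {action}")
```

In this made-up scenario the latency-sensitive inference job keeps running on battery power while the other three jobs are checkpointed, migrated, or throttled. A real orchestrator would also have to weigh link bandwidth, checkpoint cost, and the time remaining before eclipse, none of which this sketch models.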

Hear Us Out. Please.

“Hype” has become a dirty word in technology, and often deservedly so. At its worst, it means fraud and vaporware. But, at its best, it is a refusal to accept that the world as it is exhausts the world as it could be. The most consequential leaps in human capability have never emerged from prudence. They have emerged from the collision of an almost childlike insistence that the frontier is closer than anyone believes with the mature scientific apparatus to actually reach for it. We have fallen into a cultural malaise that treats this kind of ambition as naïveté, a learned cynicism that mistakes the cautious for the wise. America’s quintessential (literal) moonshot—the Apollo program—was pursued on an accelerated timeline that no cost-benefit analysis could justify, driven by a combination of geopolitical urgency and sheer will, and it reshaped the industrial and technological trajectory of a generation.

We are not arguing that Musk’s plan will work. We are arguing that the attempt is worth defending, because the things that must be built along the way are things we need regardless. There is something clarifying about an impossible goal: it reveals, in the striving, capacities that no reasonable objective would ever have called into existence. We should not immediately scoff at our most ambitious builders who, in reaching for something improbable, might deliver what no one else is trying to build.
